For some time now, smartphone manufacturers have been multiplying technological innovations in photography. SuperSpectrum, HDR Plus, Dual Aperture, Dual Pixel: what lies behind these marketing terms, and how are manufacturers getting ever closer to the quality of real cameras? We explain everything in our guide to smartphone photography.

From left to right: Galaxy S10, Pixel 3 and Huawei P30 Pro

Now that the most powerful chips are found in some mid-range smartphones, that the longest battery life sometimes belongs to entry-level devices, and that design innovations reach smartphones costing 500 euros, photography has become the factor that sets the most high-end smartphones apart.

To get there, smartphone manufacturers have pulled out all the stops. Not only have they adopted the techniques of traditional digital photography, as found at Canon, Nikon or Panasonic, but they have gone even further. Because smartphone photo sensors are so small, smartphone photography has been enriched with many technological innovations that compensate for this flaw inherent to the format. We detail all of this in this guide.

How does digital photography work?

Digital photography largely uses the same ideas as film photography, at least for capturing light and therefore information, and adjusting exposure.

The more light a digital camera records, the brighter (more exposed) the photo will be. Conversely, the less light it captures, the darker the shot will be. So far, nothing surprising.

To manage light, digital cameras rely on four parameters: sensor size, aperture, shutter speed and ISO sensitivity. Depending on the shot, you adjust one or more of these parameters to suit not only the ambient light, but also the action in the scene and the type of shot (portrait, landscape, macro, etc.).

Sensor size

A camera sensor is not judged by its number of megapixels, far from it. One of its most important characteristics is actually its physical size: the larger the sensor, the larger the surface that can capture light. This is why the cameras with the largest sensors, called full frame, are often the most expensive.

The Sony Alpha 7 III has a 24 × 36 mm sensor, known as "full frame"

Aperture

This is the opening of the diaphragm, the membrane inside the lens that opens or closes to let light through. The aperture is written f/N, where f is the focal length and N is the f-number: f/N is the diameter of the entrance pupil, the opening left by the diaphragm to let light in. N is therefore the focal length divided by that diameter. So be careful when reading a spec sheet: the larger the opening, the smaller the number after the f. f/16 is a very small aperture, while f/1.4 is a large one.
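To make the f-number concrete, here is a minimal Python sketch. It relies only on the relation above (pupil diameter = focal length / N) and on the standard consequence that, at a fixed focal length, the light gathered scales with the pupil area, i.e. with 1/N². The 50 mm focal length is an arbitrary example, not taken from a specific lens.

```python
import math

def pupil_diameter_mm(focal_length_mm: float, f_number: float) -> float:
    """Diameter of the entrance pupil: 'f/N' literally means f divided by N."""
    return focal_length_mm / f_number

def relative_light(f_number_a: float, f_number_b: float) -> float:
    """How many times more light aperture a lets in than aperture b.

    For a fixed focal length, light scales with the pupil area, i.e. with 1 / N**2.
    """
    return (f_number_b / f_number_a) ** 2

def stops_between(f_number_a: float, f_number_b: float) -> float:
    """Difference in stops (each stop doubles the light) between two apertures."""
    return math.log2(relative_light(f_number_a, f_number_b))

# A hypothetical 50 mm lens, wide open at f/1.4 and stopped down to f/16
print(round(pupil_diameter_mm(50, 1.4), 1))  # 35.7
print(round(pupil_diameter_mm(50, 16), 1))   # 3.1
print(round(relative_light(1.4, 16)))        # 131
print(round(stops_between(1.4, 16), 1))      # 7.0
```

In other words, going from f/16 to f/1.4 lets in roughly 130 times more light, about 7 stops.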

The aperture used here, f/16, is very small and lets little light reach the sensor.

Together with the sensor size, the aperture is especially useful for controlling background blur. The larger the aperture (and therefore the more light is recorded), the more the background behind the subject will be blurred. This is particularly useful for portraits, to make the subject stand out, as the photos below, taken with a Panasonic Lumix GX7, show. The first was taken at f/16 and the second at f/1.7:

Shutter speed

As its name suggests, the shutter speed corresponds to the time during which the sensor records the image. The faster the speed, the less light the sensor can record and therefore the darker the photo. Conversely, a slow speed lets the sensor record the image for longer, sometimes several seconds, and therefore capture much more light.

Shutter speed also plays an important role in blur. If you hold your camera in your hand and want to capture moving subjects, you are better off using a fast speed; otherwise the subject may come out blurry, having moved during the capture. Conversely, if you want to capture the light trails of car headlights from a bridge over a freeway in the middle of the night, you may want to put your camera on a tripod and use a slow speed.
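Aperture and shutter speed trade off against each other. A common way to express this is the exposure value, EV = log2(N²/t), where N is the f-number and t the exposure time in seconds; each step of 1 EV halves or doubles the light. The sketch below is illustrative only: it ignores ISO and assumes a sensor that responds linearly to light.

```python
import math

def exposure_value(f_number: float, shutter_s: float) -> float:
    """EV = log2(N^2 / t): a higher EV means less light reaches the sensor."""
    return math.log2(f_number ** 2 / shutter_s)

def shutter_for_same_exposure(f_number_old: float, shutter_old_s: float,
                              f_number_new: float) -> float:
    """Exposure time that keeps the same exposure after changing the aperture."""
    return shutter_old_s * (f_number_new / f_number_old) ** 2

def stops_lost(shutter_old_s: float, shutter_new_s: float) -> float:
    """How many stops of light are lost by switching to a faster shutter speed."""
    return math.log2(shutter_old_s / shutter_new_s)

# Closing down from f/2.8 to f/5.6 at 1/60 s requires a 4x longer exposure (1/15 s).
print(shutter_for_same_exposure(2.8, 1 / 60, 5.6))
# Going from a 4 s tripod exposure to a handheld 1/60 s loses almost 8 stops.
print(round(stops_lost(4, 1 / 60), 1))  # 7.9
```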

ISO sensitivity

The notion of sensitivity comes from the film used before digital photography. Roughly speaking, on a digital camera it is an amplification of the signal, which lets the device increase brightness without actually capturing more light. It is measured in ISO, with the lowest sensitivities often at ISO 100 and the highest reaching tens of thousands.

This is often the last setting to change when shooting, if the others are not enough. Raising the sensitivity risks introducing digital noise, which shows up for example as blue, green or red specks in a sky that is supposed to be black.
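Why does ISO brighten the image without improving it? A minimal sketch, using a deliberately simplified sensor model (assumptions: photon shot noise equal to the square root of the photon count, a fixed read noise added before amplification, and ISO acting as a pure gain). Real sensors behave in more complex ways, but the key point holds: the gain amplifies signal and noise alike.

```python
import math

def sensor_output(photons: float, iso: int, read_noise_e: float = 3.0, base_iso: int = 100):
    """Return (brightness, noise, snr) for one pixel in a simplified sensor model.

    Illustrative assumptions: shot noise = sqrt(photons), a fixed read noise
    (in electrons) added before amplification, ISO = pure gain over base ISO.
    """
    gain = iso / base_iso
    noise_before_gain = math.sqrt(photons + read_noise_e ** 2)
    brightness = photons * gain
    noise = noise_before_gain * gain  # the gain amplifies the noise along with the signal
    return brightness, noise, brightness / noise

# Same final brightness, obtained two ways:
bright_scene = sensor_output(photons=1000, iso=100)
dark_scene = sensor_output(photons=125, iso=800)
print(bright_scene[0], dark_scene[0])                      # 1000.0 1000.0
print(round(bright_scene[2], 1), round(dark_scene[2], 1))  # 31.5 10.8
```

Both pixels end up equally bright, but the one that received eight times less light and was boosted to ISO 800 has a signal-to-noise ratio about three times worse.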

Smartphones, limited by their small sensors

Now that you understand the principles of camera exposure, you can forget about them and switch to smartphone photography.

While cameras use sensors of 13.2 × 8.8 mm (1 inch), 17.3 × 13 mm (Micro 4/3), 23.6 × 15.8 mm (APS-C) or 36 × 24 mm (full frame), smartphones are far from this. The Honor View 20, for example, has a main sensor of only 6.4 × 4.8 mm, and that is one of the largest smartphone camera sensors on the market, also used in Xiaomi's Mi 9.
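The gap is easier to grasp in numbers. This short sketch computes, for the sensor sizes listed above, the surface area and the crop factor (full-frame diagonal divided by the sensor's diagonal, the usual definition). Only the dimensions come from the text; the dictionary and function names are illustrative.

```python
import math

FULL_FRAME_MM = (36.0, 24.0)

def area_mm2(size):
    width, height = size
    return width * height

def crop_factor(size):
    """Ratio of the full-frame diagonal to this sensor's diagonal."""
    return math.hypot(*FULL_FRAME_MM) / math.hypot(*size)

SENSORS_MM = {
    "full frame": (36.0, 24.0),
    "APS-C": (23.6, 15.8),
    "Micro 4/3": (17.3, 13.0),
    "1 inch": (13.2, 8.8),
    "Honor View 20 (main)": (6.4, 4.8),
}

for name, size in SENSORS_MM.items():
    surface_ratio = area_mm2(FULL_FRAME_MM) / area_mm2(size)
    print(f"{name:<22} {area_mm2(size):6.1f} mm²  crop factor {crop_factor(size):.2f}  "
          f"full frame has {surface_ratio:.1f}x the surface")
```

The Honor View 20's sensor comes out at about 30.7 mm², with a crop factor of about 5.4: a full-frame sensor has roughly 28 times its surface.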

The Xiaomi Mi 9

With such small sensors, it is necessarily harder to record as much light as with a hybrid, an SLR or an enthusiast compact camera. While sensitivity and shutter speed remain meaningful settings on a smartphone (you can often adjust them in the pro photo mode), the same is much less true of the aperture.

Quoting apertures without specifying the size of the sensor is of little interest

As we have seen, the effect of the aperture depends above all on the size of the sensor: the larger the sensor, the more a change of aperture matters. Quoting apertures of f/2.2, f/2.0, f/1.8 or even f/1.5, often without even specifying the size of the sensor, is therefore of little interest. We saw this when testing the Galaxy S9 last year, one of the first smartphones with a variable aperture: switching from f/2.4 to f/1.5 ultimately had only a tiny impact on the final exposure of the shot.
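One common way to compare apertures across sensor sizes is the full-frame "equivalent aperture": multiplying the f-number by the crop factor gives the f-number that would produce the same depth of field (and background blur) on a full-frame camera for the same framing. This equivalence concerns blur, not the brightness of the exposure. The sketch assumes a crop factor of about 5.4, the value for a 6.4 × 4.8 mm sensor; the Galaxy S9's own sensor size is not given here, so treat the numbers as an illustration.

```python
def full_frame_equivalent_aperture(f_number: float, crop_factor: float) -> float:
    """f-number giving the same depth of field on full frame, for the same framing."""
    return f_number * crop_factor

CROP_FACTOR = 5.4  # assumption: a sensor of roughly 6.4 x 4.8 mm

for n in (2.4, 1.5):
    equivalent = full_frame_equivalent_aperture(n, CROP_FACTOR)
    print(f"f/{n} on the phone behaves like f/{equivalent:.1f} on full frame for background blur")
```

Both settings land around f/8 to f/13 in full-frame terms, where the depth of field is already large, which helps explain why a variable aperture changes so little on a phone.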

And that is without mentioning that the vast majority of current smartphones, with the notable exception of Samsung's Galaxy S and Note models since the S9, only offer a fixed aperture, since the diaphragm is fixed inside the camera module.

How do manufacturers overcome these shortcomings?

Smartphone manufacturers have had to live with this constraint: sensors much smaller than those of digital cameras. Larger sensors would be technically possible on a smartphone (Panasonic did it with its Lumix DMC-CM1, equipped with a one-inch sensor), but the extra bulk is clearly noticeable.

The Panasonic Lumix DMC-CM1

In the past, HTC was one of the first manufacturers to communicate not on the number of megapixels of its sensors, but on the size of their photosites, i.e. the individual cells that each capture one pixel. With the HTC One in 2013, the Taiwanese manufacturer highlighted its 2-micron photosites, larger and therefore able to record more light.


This trend did not last: the Galaxy S10, for example, uses 1.4 μm photosites, while the Huawei P30 Pro makes do with 1 μm photosites.
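Since a photosite collects light over its surface, the light it receives scales with the square of its side (square photosites assumed). The sketch below compares the photosite sizes quoted above and derives a pixel pitch from a sensor width; the 8000-pixel width used in the example is a hypothetical figure for a 48-megapixel sensor, not one taken from the text.

```python
def pixel_pitch_um(sensor_width_mm: float, pixels_across: int) -> float:
    """Photosite size (micrometers) for a sensor of the given width and resolution."""
    return sensor_width_mm * 1000 / pixels_across

def light_ratio(pitch_a_um: float, pitch_b_um: float) -> float:
    """Light collected by a photosite of size a relative to one of size b (square photosites)."""
    return (pitch_a_um / pitch_b_um) ** 2

print(light_ratio(2.0, 1.0))            # 4.0: HTC One photosite vs P30 Pro photosite
print(round(light_ratio(1.4, 1.0), 2))  # 1.96: Galaxy S10 photosite vs P30 Pro photosite
# Hypothetical 48 MP sensor, 8000 pixels across a 6.4 mm wide sensor:
print(round(pixel_pitch_um(6.4, 8000), 2))  # 0.8
```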

Because smartphone manufacturers are constrained by sensor size, they have been forced to innovate. In many ways, smartphone photography today involves far more technology than photography with traditional digital cameras.

Sensor modifications to record more light

To let sensors of the same size capture more light, manufacturers have added a few features, or tricks, to their camera sensors.

Monochrome sensors

The first of these is the use of a monochrome grayscale sensor, in addition to RGB color sensors (red / green / blue). This is a trick that has been used in particular by Huawei on the P9, Mate 9, P10 and Mate 10 Pro, but also by Essential on the PH-1 or by HMD on the recent Nokia 9 PureView.

Three of the five sensors of the Nokia 9 PureView are monochrome

The advantage of monochrome sensors is that they do not have to record color, so they can concentrate solely on light and detail. At Huawei, the monochrome sensors had a higher resolution than the color sensors, which made it possible, for example, to offer a hybrid zoom while keeping an excellent level of detail. For its part, HMD Global explains that a monochrome sensor can record three times more light than an RGB sensor, and that the combination of 3 monochrome sensors and 2 color sensors on the Nokia 9 PureView captures 10 times more light than a single RGB sensor.
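HMD's figures can be reproduced with a deliberately idealized model: assume each color filter passes one third of the visible light, while a monochrome photosite (no filter) keeps all of it. Real filters overlap and are not perfect, so this is an approximation, not HMD's own calculation.

```python
from fractions import Fraction

# Idealized assumption: a color filter passes one third of the visible light,
# a monochrome photosite (no color filter) keeps all of it.
RGB_TRANSMISSION = Fraction(1, 3)
MONO_TRANSMISSION = Fraction(1)

def total_light(n_mono: int, n_rgb: int) -> Fraction:
    """Light captured by several identical sensors, in units of 'one RGB sensor'."""
    return (n_mono * MONO_TRANSMISSION + n_rgb * RGB_TRANSMISSION) / RGB_TRANSMISSION

print(MONO_TRANSMISSION / RGB_TRANSMISSION)  # 3
print(total_light(3, 2))                     # 11
```

The model gives 3 times more light per monochrome sensor and 11 times more for the Nokia 9 PureView's five sensors, in line with the "10 times" HMD announces.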

The RYB color sensor

But monochrome sensors are not the only trick manufacturers use. For its new P30 and P30 Pro, Huawei has chosen a new type of photo sensor, called SuperSpectrum, which records red, yellow and blue light instead of red, green and blue. We thus go from an RGB sensor to an RYB sensor.

According to Huawei, this sensor can record up to 40% more light than the P20 Pro's sensor, because yellow photosites capture both red and green light. To then tell yellow light apart from green light, Huawei relies on artificial intelligence. We will come back to that.
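The order of magnitude of this gain can be checked with an idealized filter model (assumption: R, G and B filters each pass one third of the light, a yellow filter passes red plus green, i.e. two thirds). Comparing a classic RGGB tile with an RYYB tile then gives about 50% more light; Huawei's 40% figure for real filters is of the same order. The `estimate_green` helper is a hypothetical, naive reconstruction, not Huawei's AI-based processing.

```python
from fractions import Fraction

# Idealized filters (assumption): R, G and B each pass one third of visible light,
# a yellow filter passes red + green, i.e. two thirds.
TRANSMISSION = {
    "R": Fraction(1, 3),
    "G": Fraction(1, 3),
    "B": Fraction(1, 3),
    "Y": Fraction(2, 3),
}

def mosaic_light(tile: str) -> Fraction:
    """Average share of incoming light kept by one 2x2 tile of the filter mosaic."""
    return sum((TRANSMISSION[color] for color in tile), Fraction(0)) / len(tile)

def estimate_green(yellow: float, red: float) -> float:
    """Naive reconstruction: if Y = R + G, then G = Y - R (clamped at zero)."""
    return max(yellow - red, 0.0)

rggb, ryyb = mosaic_light("RGGB"), mosaic_light("RYYB")
print(rggb, ryyb, ryyb / rggb)  # 1/3 1/2 3/2
```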

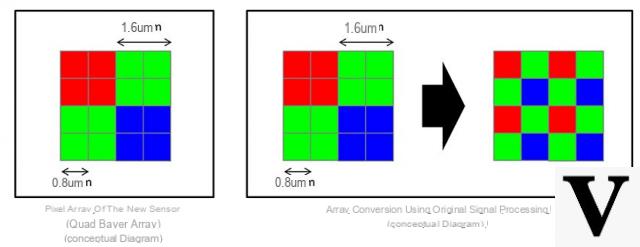

Pixel binning

Finally, another technology used to make sensors capture more light is pixel binning. The idea is to offer sensors with a very large number of megapixels, like the Sony IMX586 found in the Honor View 20 or the Xiaomi Mi 9, with a total of 48 megapixels. By default, however, the shots that come out of the sensor are four times smaller: only 12 megapixels.

Sony's IMX586 sensor will combine pixels together to make them brighter

What happened in between? It is very simple: the sensor's photosites, each theoretically responsible for recording one pixel, are grouped in fours. By merging the four recorded pixels into one, the smartphone works out the average color recorded and smooths out the noise of each individual pixel. This is a good way both to record four times more light per pixel and to reduce the digital noise that a high ISO sensitivity can cause.
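Here is a minimal sketch of 2×2 binning by averaging, assuming (as in a Quad Bayer layout) that the four photosites of each group sit under the same color filter, so averaging them does not mix colors. It simulates a flat gray patch with random noise and shows that binning divides the pixel count by four while roughly halving the noise. Real sensors may bin in hardware and sum rather than average; the principle is the same.

```python
import random
from statistics import pstdev

def bin_2x2(frame):
    """Average each 2x2 block of photosites into one output pixel."""
    height, width = len(frame), len(frame[0])
    if height % 2 or width % 2:
        raise ValueError("frame dimensions must be even")
    return [
        [(frame[y][x] + frame[y][x + 1] + frame[y + 1][x] + frame[y + 1][x + 1]) / 4
         for x in range(0, width, 2)]
        for y in range(0, height, 2)
    ]

def flatten(image):
    return [value for row in image for value in row]

# Simulated flat gray patch (true value 100) with random noise on every photosite.
rng = random.Random(42)
frame = [[100 + rng.gauss(0, 8) for _ in range(200)] for _ in range(200)]
binned = bin_2x2(frame)

print(len(binned), len(binned[0]))  # 100 100: four times fewer pixels
print(round(pstdev(flatten(frame)), 1), round(pstdev(flatten(binned)), 1))  # noise roughly halved
```

Applied to a 48-megapixel (8000 × 6000) readout, the same operation yields a 4000 × 3000, 12-megapixel image.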

Portrait mode and depth of field management

Because smartphone photo sensors are so small, and changes of aperture have so little effect, depth of field on a smartphone is necessarily large. Concretely, this means that without processing, it would be physically impossible to offer a nice background blur in portrait mode that makes the face of the person photographed stand out, as an SLR can.

Fortunately, processing can make up for it, in three ways.

Portrait mode with two sensors

To know where the subject that should stay sharp is and which areas to blur, the smartphone needs to know the depth of the scene. It can calculate it using a second sensor: by comparing the two images, taken from two slightly different positions on the back of the phone, it can measure the differences between them and partially reconstruct the scene in 3D. The device can then work out which subject is in focus and which areas should be blurred. This is, for example, what the second sensor on the back of the OnePlus 6T is used for.
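The depth calculation from two viewpoints can be sketched with the classic pinhole stereo relation Z = f × B / d, where f is the focal length in pixels, B the distance between the two cameras and d the disparity (how far a point shifts between the two images). The camera parameters and the blur tolerance below are hypothetical; actual phones also have to match points between images, which this sketch leaves out.

```python
def depth_from_disparity(focal_px: float, baseline_mm: float, disparity_px: float) -> float:
    """Distance (mm) of a point from the pinhole stereo relation Z = f * B / d.

    focal_px: focal length in pixels; baseline_mm: distance between the two
    cameras; disparity_px: horizontal shift of the point between the two images.
    """
    if disparity_px <= 0:
        return float("inf")  # no measurable shift: the point is effectively at infinity
    return focal_px * baseline_mm / disparity_px

def should_blur(depth_mm: float, subject_depth_mm: float, tolerance_mm: float = 300) -> bool:
    """Blur anything noticeably farther from (or closer than) the subject."""
    return abs(depth_mm - subject_depth_mm) > tolerance_mm

# Hypothetical camera pair: 3000 px focal length, cameras 12 mm apart.
subject = depth_from_disparity(3000, 12, 36)    # the face
background = depth_from_disparity(3000, 12, 4)  # the wall behind
print(subject, background, should_blur(background, subject))  # 1000.0 9000.0 True
```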

Google's Pixel 3 and Pixel 3 XL, meanwhile, achieve the same result with a single sensor. To do this, Google uses a technology it calls “dual pixel”. Simply put, each photosite of the Pixel 3 is split in two, one half on the left and one on the right. This lets the smartphone record two very slightly offset images, analyze the depth of the scene using parallax, and make the subject stand out.

The portrait mode of the Google Pixel 3 XL

Portrait mode with a single sensor

A slightly less reliable method of offering portrait mode with a single sensor is to use simple algorithms. This is often the case with front cameras, for selfies.

Manufacturers offering this feature rely on data from a single sensor. It is therefore up to the processor and image processing to analyze the photograph, isolate the subject and add blur all around it. Naturally, with less information, especially about depth, the result is often less precise, and the cutout is frequently hit-and-miss, particularly around hair or glasses. In addition, some smartphones such as the iPhone XR refuse to use portrait mode (or aperture mode) on objects, since it only works on faces.
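Whatever produces the subject mask (two sensors, dual pixel or a single-image algorithm), the final step usually comes down to blending a sharp and a blurred version of the image. Here is a minimal sketch on one row of pixels, assuming the mask is already known: 1 means subject, 0 means background, and intermediate values give a soft transition. The box blur stands in for the more realistic blur a phone would use; all names and values are hypothetical.

```python
def box_blur(row, radius):
    """Blur one row of pixels by averaging each pixel with its neighbors."""
    blurred = []
    for i in range(len(row)):
        window = row[max(0, i - radius): i + radius + 1]
        blurred.append(sum(window) / len(window))
    return blurred

def portrait_composite(row, mask, radius=2):
    """Blend sharp and blurred pixels: mask 1 = subject (kept sharp), 0 = background.

    Values between 0 and 1 give a soft edge; an inaccurate mask shows up most
    in those transition areas (hair, glasses).
    """
    blurred = box_blur(row, radius)
    return [m * sharp + (1 - m) * soft for sharp, soft, m in zip(row, blurred, mask)]

# One hypothetical image row: a busy background on the left, the subject on the right.
row = [0, 100, 0, 100, 0, 100, 50, 50, 50, 50]
mask = [0, 0, 0, 0, 0, 0.5, 1, 1, 1, 1]
print([round(v, 1) for v in portrait_composite(row, mask)])
```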

Portrait mode with a ToF sensor

One of the latest innovations in smartphone photography is the ToF, or “time of flight”, sensor. Concretely, this sensor measures the time it takes for an emitted light signal to bounce off the scene and come back to the camera. By combining these measurements for many points, it builds a 3D map of the scene and can therefore measure depth precisely.
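The underlying arithmetic is simple: light travels to the object and back, so distance = speed of light × round-trip time / 2. The sketch below shows how short these delays are at portrait distances. Many phone ToF modules infer the delay indirectly (for example from the phase of modulated light) rather than timing a single pulse, but the distance relation is the same.

```python
SPEED_OF_LIGHT_M_S = 299_792_458

def distance_from_round_trip(seconds: float) -> float:
    """Distance (m) to the object; divided by 2 because the light goes there and back."""
    return SPEED_OF_LIGHT_M_S * seconds / 2

def round_trip_time(distance_m: float) -> float:
    """Time (s) for light to reach an object at this distance and return."""
    return 2 * distance_m / SPEED_OF_LIGHT_M_S

print(f"{round_trip_time(1.0) * 1e9:.2f} ns")  # 6.67 ns for a subject 1 m away
```

A depth difference of a few centimeters therefore corresponds to differences of a fraction of a nanosecond, which is why these sensors need very precise timing.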

The portrait mode of the Huawei P30 Pro

A camera that uses an additional ToF sensor can therefore offer a particularly precise portrait mode, even for the most complex areas, as is the case on the Huawei P30 Pro.

Image processing and machine learning

We touched on it earlier, but in recent years many smartphone manufacturers have had only one phrase on their lips when talking about photography: artificial intelligence. Honor, for example, goes so far as to print the words "AI Camera" on the back of some smartphones, such as the Honor 8X.

The Honor 8X

Concretely, AI takes several forms. It can be simple scene recognition, which makes automatic mode even more automatic by adjusting the settings depending on whether you are shooting a portrait, food or a landscape. At Huawei and Honor, AI mode can, for example, boost the blue of the sky in a landscape photo to make it stand out even more, or accentuate the green of vegetation. At Samsung, it is scene recognition, and therefore AI mode, that switches the Galaxy S10 to night mode.
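Scene recognition itself relies on a trained classifier, which is out of scope here. The sketch below assumes the scene label is already known and shows only the second step: applying a per-scene adjustment, here a saturation boost computed with Python's standard `colorsys` module. The preset values are hypothetical, not those of Huawei, Honor or Samsung.

```python
import colorsys

# Hypothetical presets: saturation multiplier for each recognized scene.
SCENE_PRESETS = {"landscape": 1.3, "food": 1.2, "portrait": 1.0}

def apply_scene_preset(rgb, scene):
    """Boost the saturation of one RGB pixel (components in 0..1) for the detected scene."""
    boost = SCENE_PRESETS.get(scene, 1.0)
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    return colorsys.hsv_to_rgb(h, min(s * boost, 1.0), v)

sky = (0.5, 0.6, 0.8)
print(apply_scene_preset(sky, "landscape"))  # same hue and brightness, deeper blue
print(apply_scene_preset(sky, "portrait"))   # practically unchanged
```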

But it can also be a more advanced form of artificial intelligence, based on machine learning. This is the case, for example, on Google's Pixel 3: Google trains its algorithms on huge numbers of images, and the Pixel 3 uses what they have learned to partially reconstruct what can be expected from a photo. The Pixel 3's Super Res Zoom, for its part, increases the level of detail of a zoomed shot while keeping a natural look by merging a burst of frames, each very slightly offset by the natural movement of your hand.

Multiple shots for better dynamic range

In photography, dynamic range is the gap between the brightest and darkest areas that can be captured in a single image. With a low dynamic range, it is impossible to expose both a blue sky and the colors of buildings correctly at the same time: either the sky will be completely white, as if burned by the light, or the buildings will be black, drowned in shadow.
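Dynamic range is usually counted in stops, each stop being a doubling of brightness. This small sketch uses hypothetical values: a scene whose sky is 4,000 times brighter than its shadows, shot with a sensor that can only span a 1,000:1 ratio in one exposure.

```python
import math

def dynamic_range_stops(brightest: float, darkest: float) -> float:
    """Dynamic range in stops: each stop is a doubling of brightness."""
    return math.log2(brightest / darkest)

# Hypothetical values for the scene and for what the sensor can span in one shot.
scene = dynamic_range_stops(4000, 1)
sensor = dynamic_range_stops(1000, 1)
print(f"scene: {scene:.1f} stops, sensor: {sensor:.1f} stops, missing: {scene - sensor:.1f}")
# scene: 12.0 stops, sensor: 10.0 stops, missing: 2.0
```

The two missing stops are what ends up as burned-out sky or blocked-up shadows.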

Photo in HDR mode taken with the OnePlus 6T

Fortunately, to increase this dynamic range, manufacturers have built HDR (high dynamic range) modes into their cameras. The technique comes straight from traditional digital photography: you take several shots at different exposures, then merge them in image editing software so that every area of the photo is correctly exposed. In our landscape example, the sky will be blue in the least exposed photo and the buildings will be well lit in the most exposed one. By combining the two, we get the perfect photo.
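The merge can be sketched for a single pixel as follows, under simplifying assumptions: a linear sensor, readings normalized between 0 and 1, and a merge that discards nearly black or clipped readings, divides the rest by their exposure time and averages the result. This is one simple textbook approach, not the algorithm of any particular phone; the thresholds and exposure times are hypothetical.

```python
def merge_exposures(readings, exposure_times_s, low=0.02, high=0.98):
    """Estimate the scene radiance of one pixel from bracketed shots.

    readings: normalized values (0..1) of the same pixel in each shot.
    Nearly black or clipped readings are discarded; the others are divided by
    their exposure time (a linear sensor is assumed) and averaged.
    """
    estimates = [v / t for v, t in zip(readings, exposure_times_s) if low < v < high]
    if not estimates:
        # Every shot is unusable: fall back to the reading closest to mid-gray.
        best = min(range(len(readings)), key=lambda i: abs(readings[i] - 0.5))
        return readings[best] / exposure_times_s[best]
    return sum(estimates) / len(estimates)

times = [1 / 500, 1 / 125, 1 / 30]                    # short, medium, long exposure
sky = merge_exposures([0.4, 1.0, 1.0], times)         # only the short shot is usable
building = merge_exposures([0.01, 0.05, 0.2], times)  # medium and long shots are usable
print(round(sky), round(building, 3))  # 200 6.125
```

The merged values span a much wider range than a single shot can store, so a final tone-mapping step is still needed to compress them for display.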

On smartphones, HDR is all the more appealing because it is built in as standard. No need for photo editing software: the result is computed directly by the smartphone's processor.

Typically, smartphones take several shots in a row, each with a different exposure. The problem is that the result can sometimes be blurry, since the camera can move from one shot to the next, and so can the subjects of the photo. To get around this, HMD Global gave its Nokia 9 PureView five cameras that all take the same photo at the same time.

Night mode, an HDR +++++++++ mode

In recent years, manufacturers have started adding a night mode to their smartphones' camera apps. It can be found, for example, on the Huawei Mate 20, P30 and P30 Pro, but also at Xiaomi, OnePlus and above all Google with its Pixels.

A photo of the Galaxy S9 in low light

Several technologies can be used for this night mode. At Samsung, for example, the Galaxy S9's “Super Low Light” mode simply raised the ISO sensitivity and captured 12 different images. The smartphone then compared them to identify places where digital noise could appear. All it then had to do was remove the artifacts that appeared in only one shot and not in the 11 others, then combine all the images into one low-light photo.
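One simple way to discard artifacts that appear in only one frame is a per-pixel median over a stack of aligned frames: an isolated outlier is outvoted by the other frames. The sketch below uses the median as a stand-in technique; Samsung's actual algorithm is not described in detail here, and the frames are assumed to be already aligned.

```python
from statistics import median

def median_stack(frames):
    """Combine aligned frames pixel by pixel using the median.

    frames: list of images, each a list of rows of pixel values.
    A speck of noise present in only one frame is outvoted by the others.
    """
    return [[median(values) for values in zip(*rows)] for rows in zip(*frames)]

# 12 hypothetical 2x2 frames of a dark scene; frame 3 has a bright noise speck.
frames = [[[20, 20], [20, 20]] for _ in range(12)]
frames[3] = [[255, 20], [20, 20]]
print(median_stack(frames))  # [[20.0, 20.0], [20.0, 20.0]]
```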

At Huawei, night mode is more like a boosted HDR mode. The smartphone keeps the shutter open for several seconds to capture as much light, and therefore information, as possible. Thanks to AIS software stabilization, it can analyze the movement of your hand and cancel the resulting blur during image processing. There is thus no need for a tripod or to rest the smartphone on something, as is the case with OnePlus, for example. The same goes for moving subjects: the smartphone manages to keep only the first sharp image of people.

First photo: what I see

Second photo: what the #Pixel3 sees in night mode pic.twitter.com/G2nyDmPGBa

- Ulrich Rozier (@UlrichRozier) March 13, 2019

Finally, on Google's Pixel smartphones, the Night Sight mode is again similar to an HDR mode. The idea is to capture multiple images at different exposures depending on the movement and brightness of the scene, then combine them not only to cancel digital noise but also to remove blur from the final shot. On top of that, using machine learning, the smartphone handles white balance automatically by referring to images of the same type.
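Why does combining many frames cancel noise? If the frames are aligned and their noise is random and independent, averaging N of them divides the noise by roughly √N. The simulation below is a minimal sketch under those assumptions (flat gray patch, Gaussian noise, perfect alignment); the real merge, which also has to handle motion, is far more sophisticated.

```python
import random
from statistics import pstdev

def merged_noise(n_frames: int, noise_sigma: float = 10.0,
                 n_pixels: int = 20_000, seed: int = 1) -> float:
    """Noise (standard deviation) of a flat gray patch after averaging n aligned frames."""
    rng = random.Random(seed)
    true_value = 50.0
    merged = [
        sum(true_value + rng.gauss(0, noise_sigma) for _ in range(n_frames)) / n_frames
        for _ in range(n_pixels)
    ]
    return pstdev(merged)

for n in (1, 4, 15):
    print(n, round(merged_noise(n), 2))  # close to 10 / sqrt(n)
```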

Zooming with fixed focal length lenses

For the past two years, manufacturers have multiplied the sensors, and the lenses, on the back of smartphones. We have seen that this multiplication of cameras can be used to capture more light with monochrome sensors or to offer a portrait mode; one of the most recent uses is optical zoom.

Manufacturers can indeed offer zooms labeled x2, x3, x5 or even x10, as on the Huawei P30 Pro. Be careful, however: on its own, this figure does not mean much.

A zoom is defined by the ratio between its longest and shortest focal lengths. In concrete terms, the shorter the focal length, the wider the angle of view. Conversely, a long focal length gives a narrower field of view and therefore lets you see further.

The vast majority of photos taken with a smartphone are wide-angle

Today, the vast majority of smartphones on the market, if not all of them, have a main camera with a wide-angle lens, equivalent to 24 to 28 mm. It is called wide-angle because its focal length is shorter than 50 mm, the focal length considered standard. Note: wide-angle should not be confused with ultra wide-angle, which we discuss below.

In addition to this wide-angle lens, lenses labeled x2, x3 or x5 "zoom" have appeared. This factor is usually relative to the focal length of the main lens. On the Samsung Galaxy S10, for example, the x2 optical zoom is in fact a lens with a 52 mm equivalent focal length.
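These labels are just ratios of equivalent focal lengths. The sketch assumes a main camera of about 26 mm equivalent (within the 24 to 28 mm range mentioned above; the exact value is an assumption) and uses the 52 mm and 13 mm figures quoted for the Galaxy S10.

```python
def zoom_factor(focal_mm: float, reference_mm: float) -> float:
    """Zoom label of a lens relative to a reference lens (ratio of equivalent focal lengths)."""
    return focal_mm / reference_mm

MAIN_MM = 26.0  # assumption: main camera around 26 mm equivalent

print(zoom_factor(52, MAIN_MM))  # 2.0: the "x2" telephoto
print(zoom_factor(13, MAIN_MM))  # 0.5: the ultra wide-angle
print(zoom_factor(52, 13))       # 4.0: total range from ultra wide-angle to telephoto
```

The same "x2" label would correspond to a different focal length on a phone whose main camera is 24 or 28 mm, which is one reason the figure means little on its own.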

The same goes for the ultra wide-angle lenses found on the Xiaomi Mi 9, the Huawei P30 Pro (photos above) or the Galaxy S10. As the name suggests, these lenses have an even wider angle, and therefore a shorter focal length, than the main cameras. The Galaxy S10's ultra wide-angle goes down to a 13 mm equivalent focal length, while the P30's stops at 16 mm. The first thus offers a 120° field of view, while the second offers 107°.
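The link between equivalent focal length and field of view can be checked with the standard formula for the diagonal angle of view, 2 × atan(d / 2f), where d is the diagonal of a full-frame sensor (about 43.3 mm) and f the equivalent focal length. This is a simplified rectilinear model; manufacturers' figures can differ by a few degrees because of rounding or lens distortion.

```python
import math

FULL_FRAME_DIAGONAL_MM = math.hypot(36, 24)  # about 43.3 mm

def diagonal_fov_deg(equivalent_focal_mm: float) -> float:
    """Diagonal angle of view (degrees) for a full-frame equivalent focal length."""
    return math.degrees(2 * math.atan(FULL_FRAME_DIAGONAL_MM / (2 * equivalent_focal_mm)))

for focal in (13, 16, 26, 52):
    print(f"{focal} mm -> {diagonal_fov_deg(focal):.0f}°")
```

The formula gives about 107° for 16 mm, matching the figure above, and about 118° for 13 mm, close to the 120° announced.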

Overall, with very rare exceptions such as the Samsung Galaxy S4 Zoom, there is no true continuous optical zoom on smartphones. Optical zoom works in steps, by switching from one lens to another. Everything in between is hybrid zoom: between the wide-angle and the telephoto, for example, the smartphone computes the image and its sharpness using data from both sensors.
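A minimal sketch of why intermediate zoom levels need computation, under a simple assumed strategy: use the longest lens that does not exceed the requested zoom, then crop its image for the remaining factor. Cropping by a linear factor keeps only 1/factor² of the pixels, which is the detail hybrid zoom tries to recover by also using the other sensor. The lens lineup and 12-megapixel figure are hypothetical.

```python
def pick_lens(requested_zoom: float, lenses: list[float]) -> tuple[float, float]:
    """Return (lens used, digital crop still needed) for a requested zoom level.

    Assumed strategy: the longest lens that does not exceed the request,
    then a crop of its image for the remaining factor.
    """
    usable = [z for z in lenses if z <= requested_zoom]
    if not usable:
        raise ValueError("requested zoom is wider than the widest lens")
    lens = max(usable)
    return lens, requested_zoom / lens

def cropped_megapixels(sensor_mp: float, crop: float) -> float:
    """Cropping by a linear factor keeps 1 / crop**2 of the pixels."""
    return sensor_mp / crop ** 2

LENSES = [0.5, 1.0, 2.0]  # hypothetical phone: ultra wide-angle, main, x2 telephoto
lens, crop = pick_lens(1.5, LENSES)
print(lens, crop, round(cropped_megapixels(12, crop), 2))  # 1.0 1.5 5.33
```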

What are the best smartphones for photography in 2021?


For many people, photo quality matters a lot when choosing a new phone. Which models do best? Here is our selection of the best camera phones.