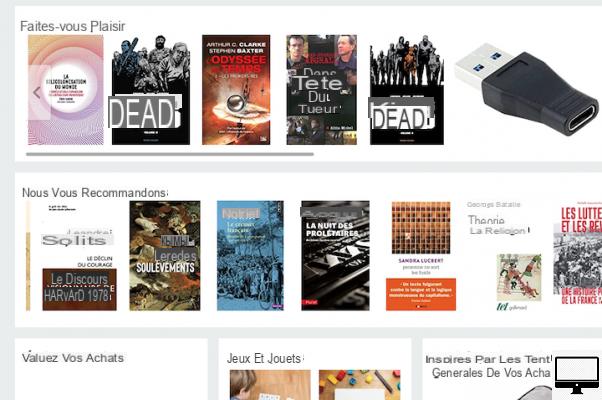

When you buy something online, watch a movie on an SVOD platform, or listen to a song on a music streaming app, algorithms accompany you throughout your journey through the cultural offering, without your necessarily being aware of it. And they influence your purchases and your cultural choices.

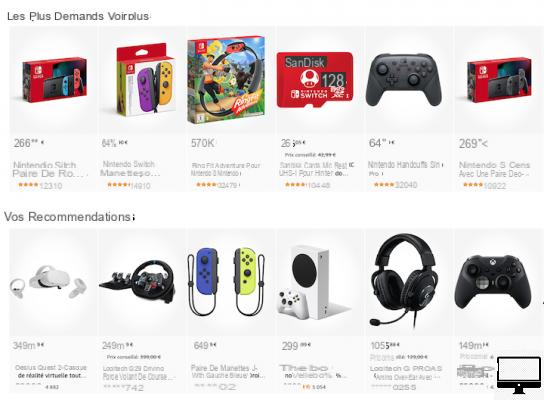

Whether on Amazon, Spotify, Netflix or Steam (the ASNS), recommendation engines lead you to popular, widely viewed and widely shared content. The starting premise is that, faced with an overabundance of choices, it can be useful to seek advice from tools that "filter" the content for us.

- Netflix, Disney+, Stadia, Facebook... does too much choice kill choice?

Faced with the paralysis and stress caused by the immensity of the Spotify or Netflix catalogs, you find that the algorithms reassure you and save you time. And time is money: it is no coincidence that Amazon's recommendation system is the foundation of its business model. According to the e-commerce platform, for which time spent making choices is wasted consumption time, 30% of the pages viewed on its site come from its algorithmic suggestions.

The effect is even stronger at Netflix, which boasts that 75% of the content viewed by its customers comes from a personalized recommendation made by its algorithm. And the best part is that users themselves are happy to be influenced by algorithms: according to a study conducted in 2015 by the CNIL, 9 out of 10 users of streaming services consider that their data is used to improve the service, and 65% heed the algorithm's recommendations. The rate rises to 68% among users of video services such as YouTube or Netflix.

Prisoners of our digital doubles

The criticisms aimed at the "filter bubbles" created by algorithms do not only concern Facebook: the algorithms of the ASNS also lock us into some of our tastes, and ultimately prevent us from "leaving our cultural comfort zone". You should know that these famous algorithms, which are only sets of calculation rules used to solve math problems, are used here to constantly filter pieces of information (films, music, books, images, web pages) while we are online. To do this, they draw on two resources: the Internet user's own browsing behavior (search keywords, usage history, consumption behavior) and collaborative, social recommendations, which are based on the behavior of similar ("like") users.
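To make the second resource more concrete, here is a minimal sketch of a user-based collaborative recommendation. It is an illustration only, not the method of any platform named in this article: the `likes` data, the user and film names, and the `similarity` and `recommend` functions are all hypothetical, and cosine similarity over sets of liked items is just one common choice among many.

```python
from math import sqrt

# Hypothetical "like" data: user -> set of liked items (illustrative only).
likes = {
    "alice": {"film_a", "film_b", "film_c"},
    "bob": {"film_a", "film_b", "film_d"},
    "carol": {"film_e"},
}


def similarity(a, b):
    """Cosine similarity between two sets of liked items."""
    if not a or not b:
        return 0.0
    return len(a & b) / sqrt(len(a) * len(b))


def recommend(user, likes, top_n=3):
    """Rank unseen items by the summed similarity of the users who liked them."""
    seen = likes[user]
    scores = {}
    for other, items in likes.items():
        if other == user:
            continue
        weight = similarity(seen, items)
        if weight == 0.0:
            # Users with nothing in common contribute nothing at all.
            continue
        for item in items - seen:
            scores[item] = scores.get(item, 0.0) + weight
    # Highest score first; ties broken alphabetically for a stable order.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [item for item, _ in ranked[:top_n]]


print(recommend("alice", likes))
```

In this toy data set, alice is only ever offered what bob liked. Whatever carol likes never reaches her, because the two have nothing in common: a small-scale version of the bubble described above.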

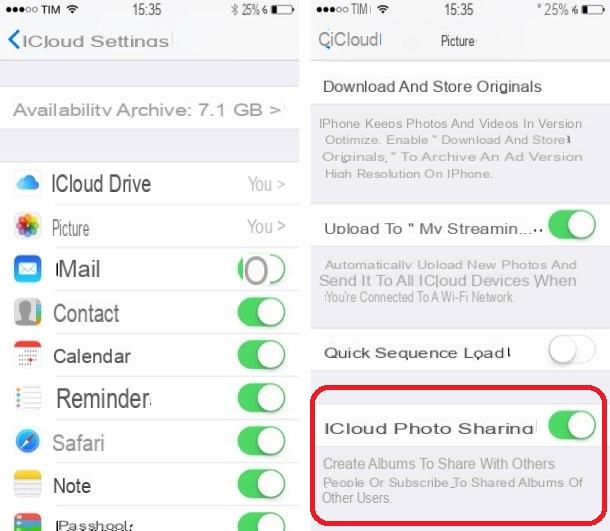

All this data, once combined, forms "personal cultural data": it reflects your choices and, thanks to AI, allows recommendation systems to adapt to your tastes (which may change). Netflix, for example, uses algorithms to change the thumbnails of the films or series displayed on its home page, depending on the subscriber's usage history: for the same film, the poster used can thus resemble that of a thriller, a horror film, a romance, or a comedy. This means that when you are on Netflix thinking you are making your own selection, your sense of having a choice, or of being able to choose "by chance", is just an illusion. You are actually a prisoner of your digital double, which has locked you in your cultural filter bubble.

Our tastes are manipulable…

But recommendation tools are not only capable of influencing our choices: they can also modify our tastes themselves. This is the conclusion of a behavioral economics experiment, published in MIT Sloan in 2020, titled "The Side Effects of Recommender Systems". A team of American researchers asked 169 students (all avid music consumers) to listen to the first 30 seconds of dozens of songs.

Each song was given a score between 1 and 5 stars. The students were told that these scores were calculated from their past preferences, whereas in reality the ratings were generated completely at random. At the end of the test, the researchers asked them whether they were willing to buy songs, and which ones, in order to listen to them in full. Result: for each additional rating point, the participants' willingness to pay increased by 12% to 17%. And yet these scores in no way reflected their preferences.

Concretely, the participants were guided into believing that they already liked such and such a song, and in the end their desire to buy was much higher. They felt almost "forced" to like a piece of music when a recommendation tool (here, a rigged one) told them so. "Consumers don't just prefer what they've experienced and know they like; they prefer what the system has told them they'd like. This is surprising, because consumers shouldn't need a system to tell them how much they enjoyed a song they just heard. The advent of recommender systems can cause us to question our own tastes. We will move from the question 'Do I like this?' to the question 'Should I like this?'", write the researchers.

For the authors of the study, this experiment shows the "dark side" of recommendation engines: the existence of potential decision biases introduced by the algorithms, and the capacity of these systems to manipulate our preferences and tastes without our really being aware of it. "After all, the details behind recommendation algorithms are far from transparent. Flawed recommendation engines that inaccurately estimate consumers' true preferences risk lowering willingness to pay for certain items and increasing it for others, regardless of the likelihood of an actual match.

This can encourage less ethical organizations to artificially inflate the recommendations", they write. And beyond "direct manipulation" through false reviews, there is also the risk of "random error". Algorithms can be wrong and produce exaggerated evaluations: by overestimating the value of a product, or on the contrary by underestimating it, they can encourage a consumer to buy something he would never have bought otherwise (and which will disappoint him in the end), or "divert" him from content that he might otherwise have consumed.

…Because they depend on others

So should we be worried and afraid of being manipulated when we go to Netflix, Amazon or Spotify? Stéphane Laurens, a professor of social psychology, refutes the idea of such a "danger of manipulation" by algorithms: according to him, the influence of recommendation engines is in fact a phenomenon as old as commerce. What is automated by algorithms today was once done by humans: "Salespeople advise their customers with phrases like 'it'll look good on you'; advertisements say 'you love it...' and feature people delighted by the product. The stars attached to products are the counterpart of this advice on websites, advice built from clues given by profiles, purchases or page views. These are similar to the clues that we give a salesperson when we walk into his shop and talk to him for a few moments," he explains. In short, recommender systems are just the high-tech version of certain salespeople, those who are so good that they are able, through their advice, to make you buy things you don't like, and to shape your tastes. Human sellers also build their recommendations from the clues we leave them, from our habits, and from what other customers, whom they believe to be like us, buy.

But Stéphane Laurens goes further: for him, it is not simply our tastes for products that can be manipulated, but "the idea that we have of ourselves". He bases this on the research of the psychologist Hazel Markus on the "self-schema", a concept which suggests that individuals construct schemas of themselves, representations that we use to describe ourselves (I'm outgoing, I'm sincere, I'm creative). According to Markus, if an individual is given fictitious information about their personality that is very different from what they think of themselves (for example, I am introverted, hypocritical and Cartesian), and is told that it comes from the results of a serious clinical and scientific test, it will significantly influence the way they perceive themselves. "The recommendation engine says you'll enjoy this piece, the test says you're an independent person... and people don't disregard this information: our opinions and tastes are not just the fruit of our own experiences, they depend on what others tell us about ourselves and the objects around us", notes Stéphane Laurens.

Ultimately, what all these studies show us is not that we are constantly manipulated, but that we are lulled into the illusion of making our own choices autonomously and objectively. "Taking this illusion as a starting point, such results seem to show that as soon as we provide a recommendation or the opinion of others, the individual abandons his initial judgment if he had one, does not analyze reality, and changes to follow the recommendation or advice. This amounts to seeing unstable judgments, individuals who do not hold their positions, who change for no reason... They then appear as imbecile consumers whose available brain time has been skilfully captured in order to manipulate them", concludes the social psychology researcher. This does not mean that we are necessarily manipulable sheep, but simply that we are deeply social beings, and that our judgments are unstable, algorithms or not.

Recreate randomness

But how, then, can we get out of our cultural bubbles, free ourselves from this illusion of free will, and reduce all these biases? Sociologist Dominique Cardon, author of the book “What algorithms dream of”, suggests that we could, for example, reintroduce a human element into the editorial proposals of streaming platforms. Or we could improve everyone's understanding of the computing mechanisms involved, and the ability to "switch back to manual". In other words, we could learn to "lift the hood" and explain how the algorithms work.

But we could also recreate randomness, real randomness, as with the “Random Shopper” designed by developer Darius Kazemi: a bot that makes a (genuinely) random selection on Amazon, within a given budget. This is also what Forgotify offers, a site dedicated solely to songs that have never been listened to on Spotify. It uses a program that lets you listen to songs on the platform that have only ever been heard by their creators. To get out of the algorithmic bubbles and stop letting ourselves be influenced, it would thus be possible to unearth unknown content that is little listened to, watched or read, by immersing ourselves in what some call the "lonely web". But that's another story, which I'll tell you about next week, in another article.